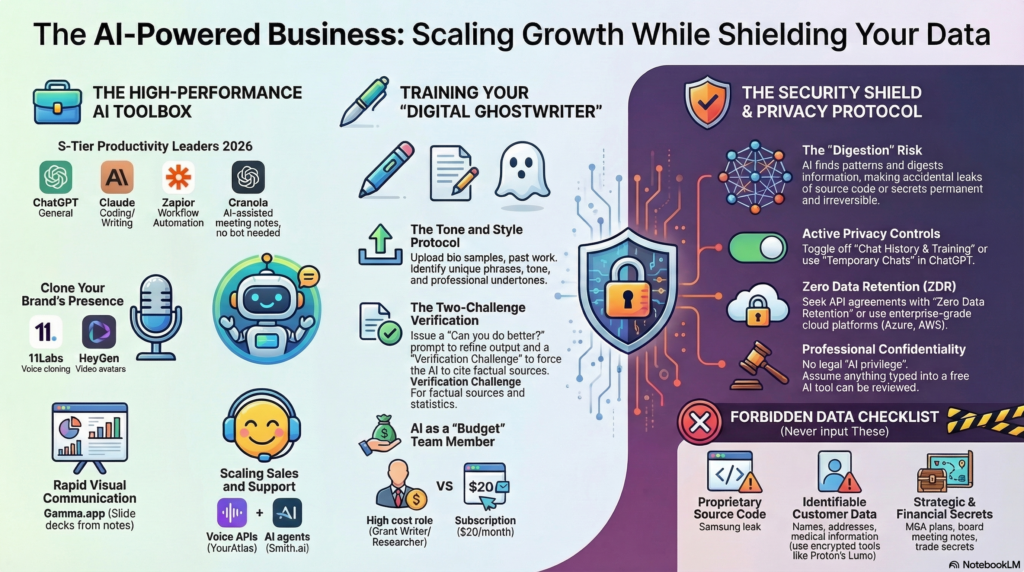

If your employees are using free ChatGPT accounts for work, your proprietary data is already at risk. AI tools are getting better fast, and businesses that use them correctly have a real edge. But there’s a wide gap between “we use AI” and “we use AI without leaking our trade secrets to the internet.”

We’re an MSP, but we’re also the team that keeps AI from becoming your biggest liability. We don’t hand you a prompt and wish you luck. We lock down your data and build AI systems that actually work for your business.

Your employees’ ChatGPT accounts are a data leak

Anything typed into a free ChatGPT window can be used to train future models unless the user opts out. Public AI models don’t store information in neat little folders. They digest it into a tangle of weighted connections. Once your source code or client data gets absorbed into that tangle, there is no reliable way to pull it back out. In principle, a competitor asking the right question could surface fragments of your proprietary information.

This isn’t hypothetical. Samsung employees uploaded sensitive source code to ChatGPT, and it became a headline. Silicon Valley IP attorney John Ferrell has pointed out that there is no legal privilege with AI. Unlike talking to your lawyer, your AI conversations have zero confidentiality protection under current law.

And if you think deleting your chat history helps: don’t count on it. In the New York Times v. OpenAI lawsuit, a federal court ordered OpenAI in 2025 to preserve chat logs it would otherwise have deleted, including conversations users had already deleted. Your “deleted” chats can end up sitting on a server somewhere, within reach of legal discovery.

We fix this by moving your team to enterprise accounts with Zero Data Retention (ZDR) agreements. Your data stays yours. Period.

What happens when businesses DIY their AI

Two stories worth reading:

ProPublica reported that DOGE used AI to review thousands of Veterans Affairs contracts. The tool only read the first 2,500 words of documents that ran hundreds of pages. It flagged cancer treatment research and patient care tools as “unnecessary.” At least 24 contracts got canceled based on those recommendations.

The Register covered an AI program manager at a pharmaceutical company who turned on “YOLO Mode” in the Cursor coding tool. During a file migration, the AI hit an error, panicked, and deleted every file on the machine, including itself. Security researchers who reviewed the tool afterward called its safeguards “woefully inadequate, if not outright worthless.”

These are a federal agency and a pharmaceutical company, organizations with real technical resources. If it happens to them, it can happen to a 30-person law firm or a medical practice with no IT department.

We prevent this by picking the right models for the job and putting verification steps between the AI’s output and any real decision.
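For the technically curious, here is a minimal sketch of what a verification step can look like. It is an illustration, not our production code: the `Recommendation` fields, the action names, and the rule itself are all assumptions made for the example. The idea is that any AI recommendation that is destructive, or that was based on only part of a document (as in the VA example above), gets held until a named person approves it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    """Hypothetical record of what an AI tool suggested for one document."""
    document_id: str
    action: str        # e.g. "cancel", "delete", or "keep"
    words_read: int    # how much of the document the model actually saw
    words_total: int   # full length of the document


def needs_human_review(rec: Recommendation) -> bool:
    """Return True when a person must sign off before anything happens."""
    truncated = rec.words_read < rec.words_total
    destructive = rec.action in {"cancel", "delete"}
    return truncated or destructive


def apply_recommendation(rec: Recommendation,
                         approved_by: Optional[str] = None) -> str:
    """Act only if the recommendation is low risk or a named person approved it."""
    if needs_human_review(rec) and approved_by is None:
        return "held for review"
    return f"{rec.action} applied"
```

A recommendation to cancel a contract based on the first 2,500 words of a 90,000-word document would come back as "held for review" until someone puts their name on it.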

What we actually build for clients

We’re not setting up ChatGPT bookmarks. We build systems:

Workflow automation. Lead comes in, gets routed to your CRM, triggers onboarding tasks. No one copies and pastes anything. Your team works on the stuff that requires a brain.

A private “company brain.” We take your SOPs, processes, and institutional knowledge and build a private knowledge base your team can search. They get instant, on-brand answers without anything touching the public internet.

AI phone agents. Inbound calls get answered, leads get qualified, notes go straight into your CRM, and follow-ups go out immediately. Your prospects hear back in seconds instead of waiting until someone checks voicemail.

Research tools. Secure research workflows that pull verified, cited, current data through private infrastructure. Better than what your team finds on Google, and none of it leaks.

Everything we build runs through private infrastructure and is designed around the compliance requirements of your industry: HIPAA for healthcare, confidentiality and data protection standards for legal, PCI DSS for payment card data.
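To make the workflow automation piece concrete (lead comes in, gets routed to your CRM, triggers onboarding tasks), here is a rough sketch. Everything in it is hypothetical: `FakeCRM` is a stand-in for whatever CRM you use, and the task names are placeholders. A real build would call your CRM vendor’s API instead.

```python
from dataclasses import dataclass, field


@dataclass
class Lead:
    name: str
    email: str
    source: str


@dataclass
class FakeCRM:
    """Stand-in for a real CRM client (assumption for illustration only)."""
    contacts: list = field(default_factory=list)
    tasks: list = field(default_factory=list)

    def add_contact(self, lead: Lead) -> int:
        self.contacts.append(lead)
        return len(self.contacts)  # hypothetical contact id

    def add_task(self, contact_id: int, title: str) -> None:
        self.tasks.append((contact_id, title))


# Placeholder onboarding steps; yours would come from your own process.
ONBOARDING_TASKS = ["Send welcome email", "Schedule intro call"]


def handle_new_lead(crm: FakeCRM, lead: Lead) -> int:
    """Route a lead into the CRM and queue its onboarding tasks."""
    contact_id = crm.add_contact(lead)
    for title in ONBOARDING_TASKS:
        crm.add_task(contact_id, title)
    return contact_id
```

The point of the sketch is the shape: one entry point per event, no manual copying, and every step recorded where your team can see it.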

Free vs. managed: what actually changes

| Area | Free/public AI risk | What we do instead |
|---|---|---|
| Data training | Your data trains public models by default | Zero Data Retention. Your data never trains anything. |
| Compliance | High risk of HIPAA, GDPR, or SEC violations | Full compliance support with data kept in specific jurisdictions |
| Infrastructure | Public servers, minimal control | Private cloud behind your firewall (Azure, AWS, or dedicated) |
| Data location | Data travels globally with no visibility | Data stays in designated data centers you choose |
| Accuracy | Unverified AI hallucinations | Human review steps before anything goes live |

Why this costs less than doing it yourself

The real cost isn’t our fee. It’s the breach you didn’t have, the compliance violation you avoided, and the three months your team didn’t spend trying to duct-tape a workflow together from YouTube tutorials.

We train AI on your specific tone, processes, and context. Proposals, emails, and reports come out sounding like your team wrote them. And we ship custom internal tools in days because we’ve done it before. Your team doing it from scratch through trial and error? That’s months.

The bottom line

AI keeps getting better. The gap between businesses using it safely and businesses winging it keeps getting wider. You don’t need to become an AI expert. You need someone on your side who already is.

If you’re in Franklin, Nashville, Brentwood, or anywhere in Middle Tennessee, we can help. Grab a free IT assessment and we’ll show you exactly where AI fits in your business and where it’s already a risk.